Ok, this is not a blog post. It’s a bit of a rant. But also one borne out of how chaotic this industry has become. Every org is building an ‘observability’ platform. Touche 😜. Because, hey, the dire situation in engineering corridors. Frustrated engineers, spiraling costs, terrible productivity, and the endless fatigue of doing this repeatedly.

But, also, hey, there’s a lot of money on the table. LOTS. And the land grab on observability reminds me of how you can create apes and get everyone to rally around it. But that's a story for another day.

In this adjective-finding industry of ‘reliability’ tooling, buzzwords are commonplace. The one that's steadily picking up; is observability. Dime and a dozen players. Even the APM folks are now morphing into ‘observability’-first orgs. Everyone’s observable, and yet, no one is reliable. Long live observability, death to observability.

Here’s a simple truth: Building a reliability framework starts with engineering teams writing clean code, paying attention to 'observability-as-code', and getting basic engineering practices sorted.

No tool can magically offer you five 9s. This is not about the vendor you choose or build. Instead, you start from basic org nomenclature to discard unnecessary data and nipping tribal knowledge. These are the basics. And the basics are boring. Boring is hard. No one wants to talk boring, because... you know... boring. But boring is what makes you resilient and reliable. Boring is battle tested.

All this chatter about Artificial Intelligence Operations to the rescue, and mythical sentient dodos deciphering reliability is loads of hogwash.

So, here’s a simple, obvious, grassroots story of ground realities. It’s so trivial, it’s so basic, it should give you the topology of where things stand. Strap your seatbelts. This is part ugly, part hilarious, and tells you how things need to change structurally if you want better reliability.

Words have consequences — Nomenclature.

Product communication in engineering is integral to avoiding entropy. The words you choose and how you design an engineering org matter more than you think. The bottomless pit of Conway’s Law will manifest in your reliability charter if you don't act on it.

How?

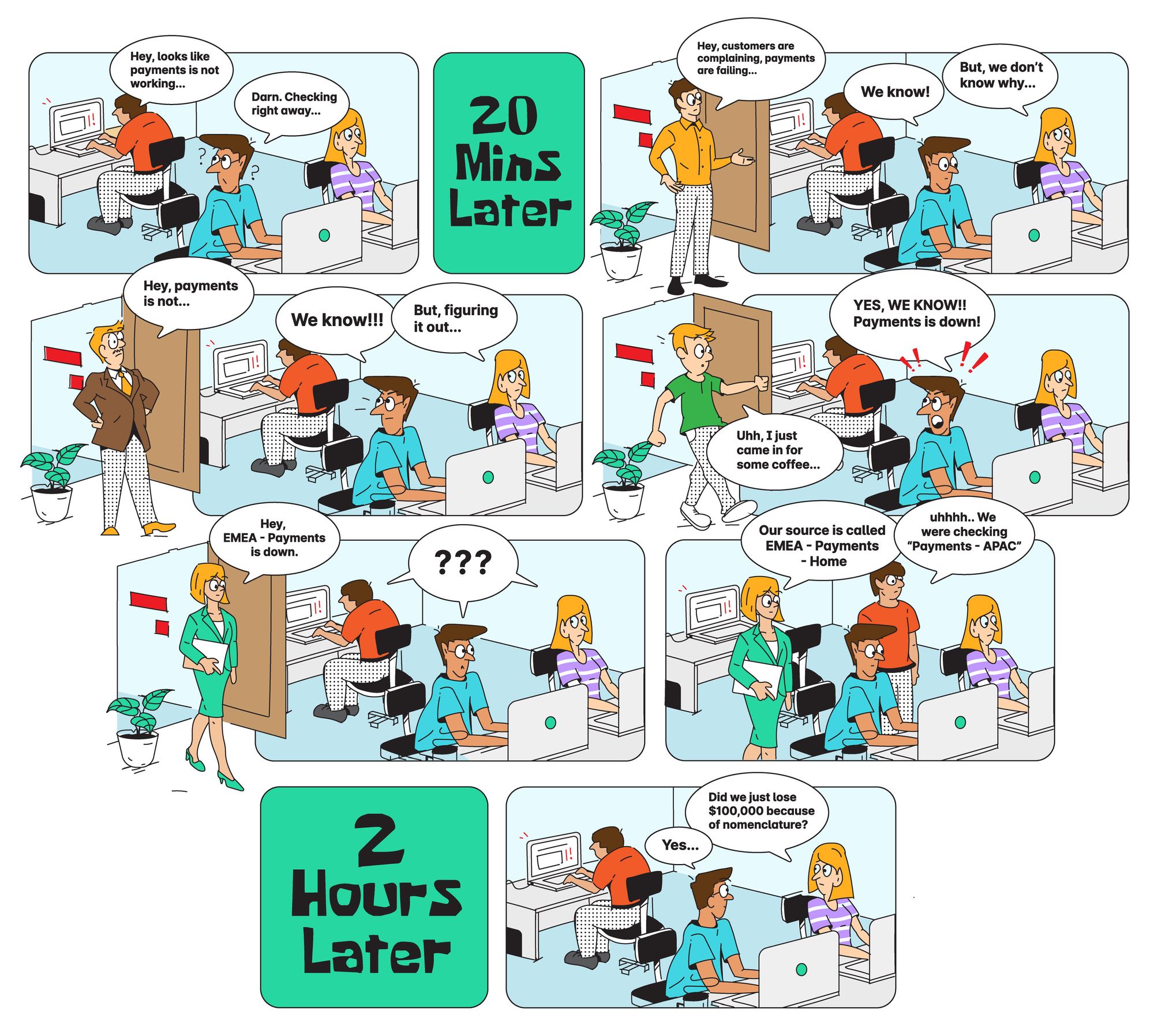

One of our large customers faced an outage. It lasted for about two hours or so. As always, folks were struggling to understand the root cause of the incident. After nearly 45 minutes, it turns out this was because of a break in nomenclature. 🤔

Team A has defined a service name as Payments-APAC. This particular service went down.

Team B realized the issue but was searching for EMEA-Payments-Home. Soon, Team C was alerted to failures, and they didn’t have a clue about the root cause. About 20 minutes in, Customer Success (Team D) is now bombarded with complaints. This adds stress to Team B and moves up the value chain all the way to Team A.

This all started because Team A’s nomenclature differed from how Team B perceived it. It’s so trivial yet so time-consuming. About six engineers, 2 PMs, 2 Customer Success folks, and a business head were all scrambling for information. Loss of productivity, time, effort... It all starts with the basics. Remember? Basics are boring. But... Boring is battle tested.

The lack of a common language for observability means you are flying blind.

Believe it or not, this simple break in naming prevented folks from discovering the root cause of the incident quickly. This was coupled with folks scrambling on who's responsible for the particular service, the corresponding dashboards, deployment access, and key people involved.

The complex realities of building a reliability mandate:

- You need willingness from engineers to do the dirty work no one wants to touch.

- You need engineering management to care about the data they ingest and how communication revolves around it.

- You need leaders who think of observability as code.

- You need ownership to tell management; this matters and that we will dedicate time to build this once and for all.

Without solid policy rules for nomenclature and verbiage around observable ‘entities,’ your war rooms will perpetually be chaotic. Your Mean Time To Detect (MTTD) will forever be high.

Observability is as observability does. Get down to the toil at the grassroots, and you’ll realize the practical aspects of building a solid reliability mandate.

Thank you for being so patient; all this pent-up frustration is jammed into this blog. If you’re still here and reading, maybe drop me a “This will get better soon, don’t worry, Aniket” on Twitter here: @aniket_rao.

Also, here’s a promise. We’re doing this differently in the observability space. Trying hard, given the challenges around how much customer education is needed. So, maybe, just maybe, check out last9.io? Or, ping me, and I can walk you through how we think of reliability. If not for nothing, I’d love to talk to folks who are equally frustrated about existing solutions. 😂😜