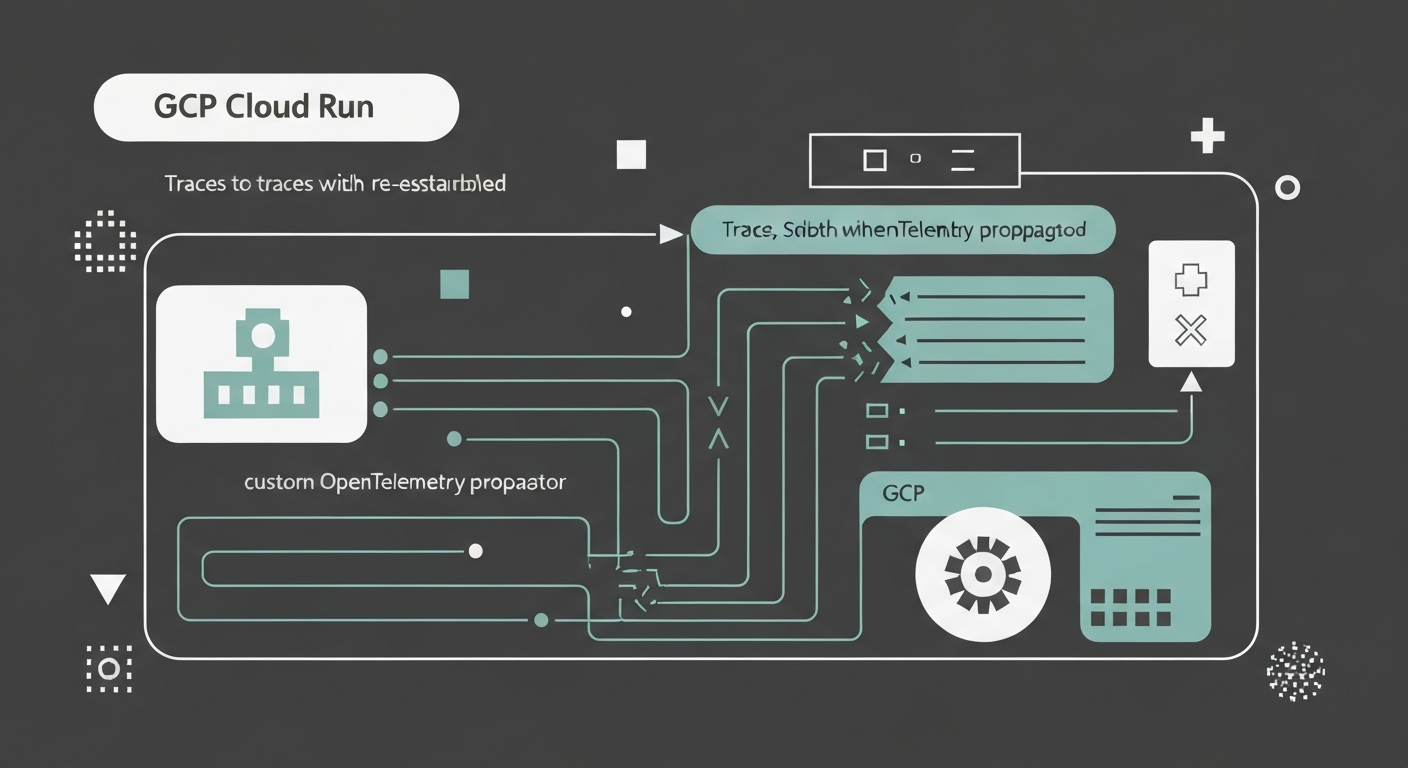

GCP's load balancer silently rewrites your traceparent header, orphaning spans in any OTLP backend. Here's the custom propagator that fixes it.

You open your trace in whatever backend you're using — Last9, Honeycomb, Datadog, doesn't matter — and something's off. Two services made a call. You see both spans. The TraceId matches. But they're sitting side by side instead of nested, like two strangers who happen to be at the same conference.

You pull the raw span data and find this:

    {
      "TraceId": "5719c8b38bb1416b61c403eb50518524",
      "SpanId": "1dc99ec20ff6420c",
      "ParentSpanId": "fc8c7a0328245590",
      "ServiceName": "service-b"
    }

The ParentSpanId is there. It just references a span that was never exported. Somewhere between service-a and service-b, a span got created, used as a parent, and then disappeared.

That's GCP's load balancer.
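
One way to confirm the symptom is to scan an exported batch for parent IDs that never show up as span IDs. This is a minimal sketch, assuming spans are plain objects shaped like the JSON above. The `findOrphans` helper and the sample service-a span are hypothetical and not part of any SDK.

```javascript
// Hypothetical helper: returns spans whose ParentSpanId points at a span
// that is missing from the same export batch.
function findOrphans(spans) {
  const known = new Set(spans.map((s) => s.SpanId));
  return spans.filter((s) => s.ParentSpanId && !known.has(s.ParentSpanId));
}

const exported = [
  // Illustrative service-a span; only the service-b values come from the data above.
  {
    TraceId: '5719c8b38bb1416b61c403eb50518524',
    SpanId: 'a1b2c3d4e5f60718',
    ParentSpanId: '',
    ServiceName: 'service-a',
  },
  {
    TraceId: '5719c8b38bb1416b61c403eb50518524',
    SpanId: '1dc99ec20ff6420c',
    ParentSpanId: 'fc8c7a0328245590',
    ServiceName: 'service-b',
  },
];

const orphans = findOrphans(exported);
console.log(orphans.map((s) => `${s.ServiceName} -> missing parent ${s.ParentSpanId}`));
```

If the list comes back non-empty for spans that sit directly behind a Cloud Run hop, the rewritten header is a likely suspect.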

What the Load Balancer Does

When a request moves between Cloud Run services, Google's load balancer intercepts it at the network layer. It reads the W3C traceparent header, creates its own span inside Google Cloud Trace, rewrites the traceparent to point to that new span, and forwards the modified request.

From the load balancer's perspective, this is correct behavior. Google Cloud Trace gets a complete picture of infrastructure-level routing. The problem is that your application only exports to your OTLP endpoint — not to Google Cloud Trace. So the load balancer span exists in Google's system, your service-b span correctly references it as a parent, and your backend has no idea what that parent is.

    ┌─────────────┐
    │  service-a  │  SpanId: abc123
    │             │  Sends: traceparent: "00-{traceId}-abc123-01"
    └──────┬──────┘
           │
           ↓
    ┌──────────────────┐
    │  GCP Load        │  Creates SpanId: xyz789
    │  Balancer        │  Rewrites: traceparent: "00-{traceId}-xyz789-01"
    └────────┬─────────┘
             │  (xyz789 only goes to Google Cloud Trace, never your OTLP backend)
             ↓
    ┌─────────────┐
    │  service-b  │  ParentSpanId: xyz789 ← references a span you'll never see
    └─────────────┘

This isn't a bug in GCP. It's a deliberate integration with Google Cloud Trace. But if you're not using Google Cloud Trace as your primary backend, you pay the cost without the benefit.
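
To make the rewrite concrete, here is a rough simulation of what happens to the header value. The `traceparent` layout (version, trace ID, parent ID, flags, joined by hyphens) comes from the W3C Trace Context spec. The function names and sample IDs are illustrative, and the load balancer's real logic is not public, so treat this as a model of the observable effect only.

```javascript
// Parse a W3C traceparent value: version-traceId-parentId-flags.
function parseTraceparent(value) {
  const match = /^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/.exec(value);
  if (!match) return null;
  const [, version, traceId, parentId, flags] = match;
  return { version, traceId, parentId, flags };
}

// Illustrative stand-in for the load balancer: keep the trace ID,
// swap in the ID of a span that only Google Cloud Trace will ever see.
function rewriteAsLoadBalancer(value, lbSpanId) {
  const parsed = parseTraceparent(value);
  if (!parsed) return value;
  return `${parsed.version}-${parsed.traceId}-${lbSpanId}-${parsed.flags}`;
}

const sent = '00-5719c8b38bb1416b61c403eb50518524-1111111111111111-01';
const received = rewriteAsLoadBalancer(sent, 'fc8c7a0328245590');

console.log(parseTraceparent(sent).parentId);     // what service-a sent
console.log(parseTraceparent(received).parentId); // what service-b sees
```

The trace ID survives the hop, which is why both spans land in the same trace, while the parent ID does not.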

The Fix: Backup Headers

The load balancer modifies W3C standard headers — traceparent and tracestate. It ignores everything else.

The solution is to copy the original traceparent into a custom header before the request leaves service-a. The load balancer rewrites traceparent but leaves x-original-traceparent untouched. When service-b receives the request, it reads the backup header instead of the modified one.

    service-a injects:
      traceparent:            00-{traceId}-abc123-01
      x-original-traceparent: 00-{traceId}-abc123-01  ← backup

    Load balancer rewrites:
      traceparent:            00-{traceId}-xyz789-01  ← modified
      x-original-traceparent: 00-{traceId}-abc123-01  ← untouched

    service-b extracts from backup:
      ParentSpanId: abc123  ← correct

This is the same pattern used in other environments where infrastructure proxies rewrite trace headers, as some service meshes do. AWS ALBs do something similar with their own X-Amzn-Trace-Id header.

The CloudRunTracePropagator

Here's the full implementation. It wraps the standard W3C propagator and adds the backup header logic on top:

    const { W3CTraceContextPropagator } = require('@opentelemetry/core');

    class CloudRunTracePropagator {
      constructor() {
        this._w3cPropagator = new W3CTraceContextPropagator();
        this._backupHeader = 'x-original-traceparent';
        this._backupStateHeader = 'x-original-tracestate';
      }

      inject(context, carrier, setter) {
        // Inject into a scratch object first so the values can be read back
        // whatever the real carrier looks like (plain object, Headers, ...).
        const scratch = {};
        this._w3cPropagator.inject(context, scratch, {
          set: (c, key, value) => { c[key] = value; },
        });

        const { traceparent, tracestate } = scratch;

        // Write the standard header and its backup copy through the caller's setter.
        if (traceparent) {
          setter.set(carrier, 'traceparent', traceparent);
          setter.set(carrier, this._backupHeader, traceparent);
        }
        if (tracestate) {
          setter.set(carrier, 'tracestate', tracestate);
          setter.set(carrier, this._backupStateHeader, tracestate);
        }
      }

      extract(context, carrier, getter) {
        const originalTraceparent = getter.get(carrier, this._backupHeader);

        if (originalTraceparent) {
          // A getter may return a string or an array of strings; use the first value.
          const originalCarrier = {
            'traceparent': Array.isArray(originalTraceparent)
              ? originalTraceparent[0]
              : originalTraceparent,
          };

          const originalTracestate = getter.get(carrier, this._backupStateHeader);
          if (originalTracestate) {
            originalCarrier['tracestate'] = Array.isArray(originalTracestate)
              ? originalTracestate[0]
              : originalTracestate;
          }

          // Hand the restored headers to the standard W3C extraction logic.
          return this._w3cPropagator.extract(context, originalCarrier, {
            get: (c, key) => c[key],
            keys: (c) => Object.keys(c),
          });
        }

        // No backup header: fall back to the (possibly rewritten) standard headers.
        return this._w3cPropagator.extract(context, carrier, getter);
      }

      fields() {
        return [
          'traceparent',
          'tracestate',
          this._backupHeader,
          this._backupStateHeader,
        ];
      }
    }

    module.exports = { CloudRunTracePropagator };

The extract method checks for the backup header first. If it's there, it uses the original context. If not (external callers, services that haven't been updated yet), it falls back to standard W3C extraction. Nothing breaks during a partial rollout.
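
The header-selection step can be sketched without the SDK. The version below also validates the backup value before trusting it. That is an extra hardening step, not something the propagator above does. `firstValue`, `selectTraceparent`, and the regular expression are illustrative names rather than OpenTelemetry APIs.

```javascript
const TRACEPARENT_RE = /^[0-9a-f]{2}-[0-9a-f]{32}-[0-9a-f]{16}-[0-9a-f]{2}$/;

// A header lookup may yield a string or an array of strings; use the first value.
function firstValue(v) {
  return Array.isArray(v) ? v[0] : v;
}

// Prefer the backup header when it is present and well-formed,
// otherwise fall back to the standard traceparent.
function selectTraceparent(headers) {
  const backup = firstValue(headers['x-original-traceparent']);
  if (typeof backup === 'string' && TRACEPARENT_RE.test(backup)) {
    return { source: 'backup', value: backup };
  }
  const standard = firstValue(headers['traceparent']);
  return standard ? { source: 'standard', value: standard } : null;
}

const rewritten = '00-5719c8b38bb1416b61c403eb50518524-fc8c7a0328245590-01';
const original = '00-5719c8b38bb1416b61c403eb50518524-1111111111111111-01';

const fromUpdatedCaller = selectTraceparent({
  traceparent: rewritten,
  'x-original-traceparent': [original],
});
const fromExternalCaller = selectTraceparent({ traceparent: rewritten });
const malformedBackup = selectTraceparent({
  traceparent: rewritten,
  'x-original-traceparent': 'not-a-traceparent',
});

console.log(fromUpdatedCaller.source, fromExternalCaller.source, malformedBackup.source);
```

Whether to validate is a judgment call: it guards against a garbage backup header from an untrusted caller, at the cost of one regex test per request.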

Wiring It Into the SDK

Pass it as textMapPropagator when initializing the Node SDK:

    const { NodeSDK } = require('@opentelemetry/sdk-node');
    const { OTLPTraceExporter } = require('@opentelemetry/exporter-trace-otlp-http');
    const { Resource } = require('@opentelemetry/resources');
    const { ATTR_SERVICE_NAME } = require('@opentelemetry/semantic-conventions');
    const { getNodeAutoInstrumentations } = require('@opentelemetry/auto-instrumentations-node');
    // Assumed path: point this at whichever file exports the CloudRunTracePropagator class.
    const { CloudRunTracePropagator } = require('./cloud-run-trace-propagator');

    const sdk = new NodeSDK({
      // `new Resource(...)` is the @opentelemetry/resources 1.x API;
      // on 2.x, use resourceFromAttributes({...}) from the same package instead.
      resource: new Resource({
        [ATTR_SERVICE_NAME]: process.env.OTEL_SERVICE_NAME || 'my-service',
      }),
      traceExporter: new OTLPTraceExporter({
        url: 'https://otlp.last9.io/v1/traces',
        headers: {
          'Authorization': 'Basic <your-token>',
        },
      }),
      // Replaces the default W3C propagator for both inject and extract.
      textMapPropagator: new CloudRunTracePropagator(),
      instrumentations: [getNodeAutoInstrumentations()],
    });

    sdk.start();

Start your app with the instrumentation preloaded:

    {
      "scripts": {
        "start": "node --require ./instrumentation.js app.js"
      }
    }

For Cloud Run Functions (2nd gen), use NODE_OPTIONS instead. The functions framework starts before your code runs, so --require via package.json scripts won't catch early instrumentation:

    gcloud functions deploy my-function \
      --gen2 \
      --runtime=nodejs20 \
      --region=us-central1 \
      --source=. \
      --entry-point=myHandler \
      --trigger-http \
      --set-env-vars="NODE_OPTIONS=--require ./instrumentation.js" \
      --set-env-vars="OTEL_EXPORTER_OTLP_ENDPOINT=https://otlp.last9.io"

The full working example, including a two-service demo you can deploy directly, is in the Last9 OpenTelemetry Examples repo.

What Changes After Deployment

Before the propagator, your traces look like this:

    service-a (GET /api/users)
    service-b (GET /api/orders)  ← no parent relationship

After:

    service-a (GET /api/users)
    └─> HTTP Client (calling service-b)
        └─> service-b (GET /api/orders)
            └─> HTTP Client (calling downstream)
                └─> downstream-service (POST /api/process)

The TraceId was always shared. The propagator restores the hierarchy.
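
The difference between the two views comes down to how a trace UI resolves parent IDs. A small sketch of that resolution, assuming a simplified span shape (`id`, `parent`, `name`) with shortened, made-up IDs. `renderTree` is a hypothetical helper, not how any particular backend is implemented.

```javascript
// Hypothetical helper: render spans as an indented tree using parent IDs.
function renderTree(spans) {
  const ids = new Set(spans.map((s) => s.id));
  const childrenOf = (parentId) => spans.filter((s) => s.parent === parentId);
  // A span is treated as a root when it has no parent or its parent was never exported.
  const roots = spans.filter((s) => !s.parent || !ids.has(s.parent));
  const lines = [];
  const walk = (span, depth) => {
    lines.push(`${'  '.repeat(depth)}${span.name}`);
    childrenOf(span.id).forEach((child) => walk(child, depth + 1));
  };
  roots.forEach((root) => walk(root, 0));
  return lines;
}

const before = [
  { id: 'a1', parent: null, name: 'service-a (GET /api/users)' },
  { id: 'a2', parent: 'a1', name: 'HTTP Client (calling service-b)' },
  // 'lb' stands for the load balancer span, which was never exported.
  { id: 'b1', parent: 'lb', name: 'service-b (GET /api/orders)' },
];
// Same spans, but service-b now points at the client span from service-a.
const after = before.map((s) => (s.id === 'b1' ? { ...s, parent: 'a2' } : s));

console.log(renderTree(before).join('\n'));
console.log(renderTree(after).join('\n'));
```

In the first render service-b shows up as a second root; in the second it nests under the HTTP client span that called it.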

Tradeoffs Worth Knowing

All services in the call chain must use it. The propagator only works end-to-end if every service both injects the backup header on outgoing requests and reads it on incoming ones. A mixed deployment — some services updated, some not — will still produce orphaned spans at the boundary between old and new services.

Gradual rollout is fine. Start with leaf services and work upstream. The fallback to standard W3C extraction means nothing breaks in the interim.
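
The rollout behavior is easy to check with a toy model of a single hop. The sketch below walks through all four combinations of updated and non-updated sender and receiver. The `send`, `loadBalancer`, and `parentSeenBy` functions are simplified stand-ins written for this illustration, not the propagator itself.

```javascript
const BACKUP = 'x-original-traceparent';

// Sender side: an updated service also writes the backup header.
function send(spanId, updated) {
  const value = `00-${'ab'.repeat(16)}-${spanId}-01`;
  return updated ? { traceparent: value, [BACKUP]: value } : { traceparent: value };
}

// Illustrative load balancer: rewrites traceparent, leaves other headers alone.
function loadBalancer(headers) {
  const [version, traceId, , flags] = headers.traceparent.split('-');
  return { ...headers, traceparent: `${version}-${traceId}-${'f'.repeat(16)}-${flags}` };
}

// Receiver side: an updated service prefers the backup header when present.
function parentSeenBy(headers, updated) {
  const chosen = (updated && headers[BACKUP]) || headers.traceparent;
  return chosen.split('-')[2];
}

const senderSpan = '1'.repeat(16);
const isLinked = (senderUpdated, receiverUpdated) =>
  parentSeenBy(loadBalancer(send(senderSpan, senderUpdated)), receiverUpdated) === senderSpan;

for (const senderUpdated of [false, true]) {
  for (const receiverUpdated of [false, true]) {
    console.log({ senderUpdated, receiverUpdated, linked: isLinked(senderUpdated, receiverUpdated) });
  }
}
```

Only the hop where both sides are updated ends up linked. The other three behave exactly as they did before the rollout, which is why a partial deployment is safe but incomplete.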

You won't see GCP infrastructure spans in your OTLP backend. This is a direct consequence of not using Google Cloud Trace. The load balancer creates those spans for Google's system, not yours. If you need infrastructure-level visibility alongside application traces, you'd need to export to both — an approach that adds operational complexity and cost most teams don't want.

For most use cases, application-level parent-child relationships are what you actually debug. The load balancer's internal span isn't useful if you can't see your own service spans in context.

The overhead is negligible. Two extra header read/write operations per request. Under 100 bytes of additional payload. Not worth measuring.
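
For a rough sense of the payload figure, the backup header can be measured as an HTTP/1.1 header line. This is back-of-the-envelope arithmetic only: it ignores the tracestate copy (which adds more when a tracestate is present) and HTTP/2 header compression.

```javascript
// Size of the backup header as an HTTP/1.1 header line: "name: value\r\n".
const name = 'x-original-traceparent';                                   // 22 characters
const value = '00-5719c8b38bb1416b61c403eb50518524-1dc99ec20ff6420c-01'; // 55 characters
const lineBytes = Buffer.byteLength(`${name}: ${value}\r\n`, 'utf8');

console.log(lineBytes); // 81
```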

Further Reading

If you're working through distributed tracing on Cloud Run, these are useful reference points:

- Implement Distributed Tracing with OpenTelemetry — foundational context on how propagation works across service boundaries

- OpenTelemetry Tracing in Distributed Systems — spans, contexts, and the propagation model explained

- OpenTelemetry Express instrumentation — if you're using Express for your Cloud Run services

- How to Debug OpenTelemetry Pipelines — when traces still look wrong after setup

- GCP Monitoring — broader observability context for GCP workloads

- W3C Trace Context specification — the standard the propagator implements

- GCP Cloud Run distributed tracing docs — Google's own documentation on the load balancer behavior

If you're sending traces to Last9 from Cloud Run, this propagator drops in without changes to your infrastructure. The OTLP endpoint accepts standard traces — once the propagator is in place, parent-child relationships show up correctly in the trace view. Full example code at last9/opentelemetry-examples.