The split that ruins your debugging

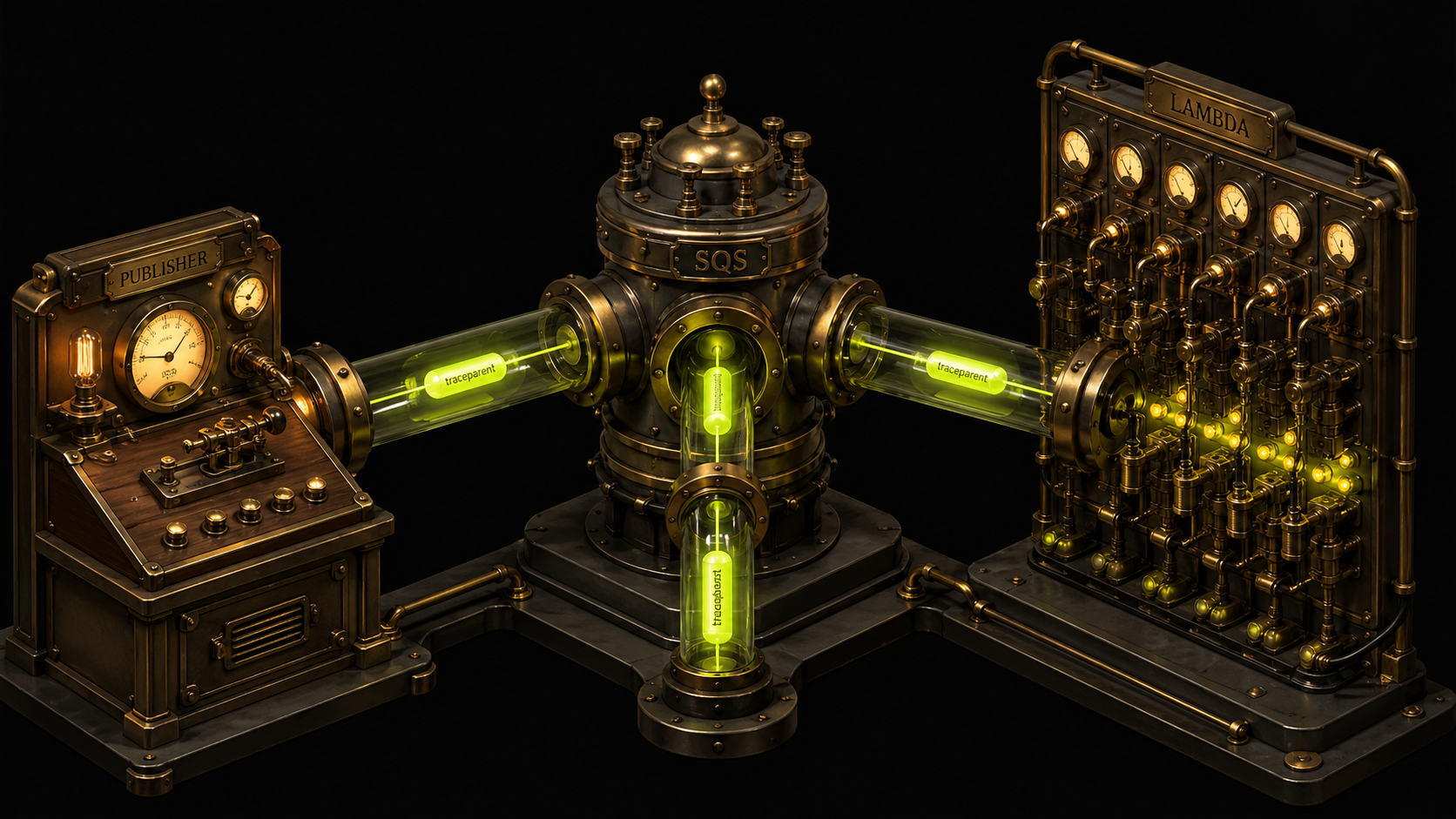

A common production architecture looks like this:

    HTTP Request → Publisher Service → SQS Queue → Lambda Consumer → (S3, DynamoDB, …)

You instrument both sides with OpenTelemetry. You deploy. You look at your traces.

Publisher trace ends at SendMessage. Lambda trace starts fresh — new trace_id, no parent. A user reports a bad response and you're staring at two disconnected waterfalls with no way to link them.

This is not a bug or a misconfiguration. It's the expected behavior. SQS is an asynchronous boundary, and OpenTelemetry's auto-instrumentation does not cross it for you. Unlike HTTP, where the SDK injects traceparent into request headers and the receiving side extracts it automatically, SQS has no built-in mechanism for trace context. You have to carry it yourself.

This post covers exactly how to do that in Python: inject on the producer side, extract on the consumer side, handle the Lambda ESM format difference that catches everyone off guard the first time, and end up with a single unified trace waterfall across two services.

If you're just getting started with Lambda and OpenTelemetry, Instrumenting AWS Lambda Functions with OpenTelemetry covers the baseline setup. This post assumes you have that in place and want to close the cross-service gap.

Why SQS doesn't propagate context automatically

HTTP instrumentation works because the protocol has headers. The OTel SDK patches your HTTP client to inject traceparent before the request leaves, and patches your server framework to extract it on arrival. Both sides share a transport-level field designed for exactly this.

SQS has no equivalent. A message travels through the queue as a body (JSON or text) plus optional MessageAttributes, key-value pairs attached to the message. The OTel SDK treats SendMessage like any other AWS API call: it instruments boto3 at a level that creates spans for the API call itself, but doesn't inject trace context into the message payload.

For a deeper look at how W3C trace context propagation works across service boundaries, see Implement Distributed Tracing with OpenTelemetry.

SQS MessageAttributes are the right transport for trace context — they're preserved end-to-end, they don't mix with the business payload, and they support arbitrary string values. The fix is manual inject/extract, ~20 lines of code, using the same OTel propagator API the SDK uses internally for HTTP.

Architecture

    ┌───────────────────┐     ┌─────────┐     ┌──────────────────┐
    │ Publisher Service │────▶│   SQS   │────▶│ Lambda Consumer  │
    │   (Flask/HTTP)    │     │  Queue  │     │  (ESM trigger)   │
    └───────────────────┘     └─────────┘     └──────────────────┘
              │                    │                    │
         traceparent          MessageAttr          extract from
         injected into        carries the          messageAttributes
         MessageAttributes    trace context        → create child span

The W3C traceparent value looks like 00-{traceId}-{spanId}-01. You write it into an SQS MessageAttribute with DataType: String. SQS passes it through untouched. The Lambda consumer reads it back and uses it to link its spans to the producer's trace.
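To make the header layout concrete, here is a minimal sketch that splits a traceparent value into its four fields (version, trace ID, parent span ID, flags). The helper name parse_traceparent is hypothetical and the code uses only the standard library; in the actual pipeline the OTel propagator does this parsing for you.

```python
import re

# W3C traceparent layout: version-traceid-parentid-flags, all lowercase hex.
_TRACEPARENT_RE = re.compile(
    r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$"
)


def parse_traceparent(value: str) -> dict:
    """Split a traceparent string into its fields (hypothetical helper)."""
    match = _TRACEPARENT_RE.match(value.strip())
    if match is None:
        raise ValueError(f"not a valid traceparent: {value!r}")
    version, trace_id, parent_id, flags = match.groups()
    return {
        "version": version,
        "trace_id": trace_id,
        "parent_id": parent_id,
        # Bit 0 of the flags byte is the "sampled" flag.
        "sampled": bool(int(flags, 16) & 0x01),
    }


fields = parse_traceparent(
    "00-7d2e75097124454585a0319f10ad0e84-5aa11db539f9feed-01"
)
print(fields["trace_id"], fields["sampled"])
```

The trailing 01 in the example is the flags byte with the sampled bit set, which is why the consumer's spans are recorded rather than dropped.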

This is the same pattern used for Kafka — if you're running message queues on both, Kafka with OpenTelemetry: Distributed Tracing Guide has the equivalent implementation.

Producer: injecting trace context

The Flask publisher creates a span for the SQS send, then uses inject() to write the current trace context into a carrier dict. That dict becomes the SQS MessageAttributes.

If you're not already instrumenting your Flask app, OpenTelemetry with Flask: A Comprehensive Guide covers the baseline setup.

    import json
    import os

    import boto3
    from opentelemetry import trace
    from opentelemetry.propagate import inject

    tracer = trace.get_tracer(__name__)

    # Assumption: the client and queue URL are created once at module load.
    # QUEUE_URL is a hypothetical environment variable name.
    sqs = boto3.client("sqs")
    queue_url = os.environ["QUEUE_URL"]


    def inject_trace_context() -> dict:
        """Convert current OTel context into SQS MessageAttributes."""
        carrier = {}
        inject(carrier)  # writes traceparent (and tracestate if set) into carrier
        return {
            key: {"DataType": "String", "StringValue": value}
            for key, value in carrier.items()
        }


    def send_message(payload: dict) -> dict:
        with tracer.start_as_current_span(
            "send_to_sqs",
            kind=trace.SpanKind.PRODUCER,
            attributes={
                "messaging.system": "aws_sqs",
                "messaging.destination.name": "my-queue",
                "messaging.operation.type": "publish",
            },
        ) as span:
            # Inject while the producer span is current, so the consumer
            # span becomes its child.
            trace_attrs = inject_trace_context()
            response = sqs.send_message(
                QueueUrl=queue_url,
                MessageBody=json.dumps(payload),
                MessageAttributes=trace_attrs,
            )
            span.set_attribute("messaging.message.id", response["MessageId"])
            return response

After this call, the SQS message carries:

    {
      "Body": "{\"action\": \"process\"}",
      "MessageAttributes": {
        "traceparent": {
          "DataType": "String",
          "StringValue": "00-7d2e75097124454585a0319f10ad0e84-5aa11db539f9feed-01"
        }
      }
    }

A few things worth noting:

- inject(carrier) writes both traceparent and tracestate (if set). Both go into MessageAttributes.
- SQS allows up to 10 MessageAttributes per message. Trace context uses 1–2 of those slots.
- Use SpanKind.PRODUCER for the send span. This follows the OTel Messaging Semantic Conventions and affects how the span is rendered in waterfall views. For a breakdown of span kinds and what they mean, see OpenTelemetry Spans Explained.
- The span must be active when inject() is called. start_as_current_span as a context manager handles this automatically.
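If your messages already carry business MessageAttributes, the trace context has to share the 10-attribute budget with them. A minimal sketch of merging the two and failing early when the limit would be exceeded; build_message_attributes and the tenant attribute are hypothetical, and the carrier is assumed to be the flat dict that inject() fills.

```python
MAX_SQS_MESSAGE_ATTRIBUTES = 10  # per-message limit mentioned above


def build_message_attributes(carrier: dict, business_attrs: dict) -> dict:
    """Merge trace-context entries with existing MessageAttributes.

    `carrier` is the flat dict filled by inject(); `business_attrs` is
    already in SQS MessageAttributes format. Hypothetical helper.
    """
    merged = dict(business_attrs)
    for key, value in carrier.items():
        merged[key] = {"DataType": "String", "StringValue": value}
    if len(merged) > MAX_SQS_MESSAGE_ATTRIBUTES:
        raise ValueError(
            f"{len(merged)} MessageAttributes exceeds the SQS limit of "
            f"{MAX_SQS_MESSAGE_ATTRIBUTES}"
        )
    return merged


attrs = build_message_attributes(
    {"traceparent": "00-7d2e75097124454585a0319f10ad0e84-5aa11db539f9feed-01"},
    {"tenant": {"DataType": "String", "StringValue": "acme"}},
)
print(sorted(attrs))
```

Raising on the producer side keeps the failure visible in the publisher's trace instead of surfacing later as a rejected SendMessage call.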

Consumer: the ESM format gotcha

When Lambda is triggered by SQS via Event Source Mapping (ESM), there's a casing difference in the MessageAttributes that silently breaks context extraction for everyone the first time.

The SQS SDK ReceiveMessage returns:

    {"traceparent": {"StringValue": "00-...", "DataType": "String"}}

Lambda ESM delivers:

    {"traceparent": {"stringValue": "00-...", "dataType": "String"}}

The attribute names are camelCase in the Lambda event, not PascalCase. If your extraction code only checks StringValue, it finds nothing, returns an empty context, and your Lambda spans become new root spans: a new trace with no parent. No error, no warning, just disconnected traces.

The fix is to handle both:

    from opentelemetry import trace
    from opentelemetry.propagate import extract
    from opentelemetry.trace import SpanKind

    tracer = trace.get_tracer(__name__)


    def extract_context_from_sqs_record(record: dict):
        """Extract OTel context from an SQS ESM record.

        Lambda ESM delivers camelCase keys (stringValue, dataType).
        The SQS SDK delivers PascalCase (StringValue, DataType).
        Handle both.
        """
        carrier = {}
        msg_attrs = record.get("messageAttributes", {})
        for key, attr in msg_attrs.items():
            value = (
                attr.get("stringValue")
                if "stringValue" in attr
                else attr.get("StringValue")
            )
            if value is not None:
                carrier[key] = value
        return extract(carrier)


    def process_record(record: dict):
        ctx = extract_context_from_sqs_record(record)
        queue_arn = record.get("eventSourceARN", "")
        queue_name = queue_arn.split(":")[-1] if queue_arn else "unknown"
        with tracer.start_as_current_span(
            f"process {queue_name}",
            context=ctx,  # links this span to the producer's trace
            kind=SpanKind.CONSUMER,
            attributes={
                "messaging.system": "aws_sqs",
                # Same attribute key as on the producer side.
                "messaging.destination.name": queue_name,
                "messaging.operation.type": "process",
                "messaging.message.id": record.get("messageId", ""),
            },
        ) as span:
            with tracer.start_as_current_span("business_logic"):
                do_work(record)  # your business logic (not shown)

The context=ctx parameter is what links the consumer span to the producer's trace. Without it, start_as_current_span falls back to the current (empty) execution context and creates a new root span. This is the entire mechanism: get the context object from extract(), pass it in.

Batch processing

Lambda ESM delivers up to 10 SQS records per invocation by default (configurable up to 10,000 for standard queues when a batch window is set). Each record may carry a different trace context, from a different producer request. Process them individually:

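    import logging

    logger = logging.getLogger(__name__)


    def handler(event, context):
        results = []
        failures = []
        for record in event.get("Records", []):
            try:
                process_record(record)
                results.append(record.get("messageId"))
            except Exception:
                # Log the failure, then report this record for retry.
                logger.exception("Failed to process %s", record.get("messageId"))
                failures.append({"itemIdentifier": record.get("messageId")})
        response = {"statusCode": 200, "processed": len(results)}
        if failures:
            response["batchItemFailures"] = failures
        return response

Enable ReportBatchItemFailures on your SQS Event Source Mapping so Lambda retries only the failed records, not the whole batch. Without it, Lambda ignores batchItemFailures: because this handler catches exceptions and returns normally, failed records would be deleted along with the successful ones. A handler that raises instead causes the entire batch to be reprocessed, or dropped if the retry count is exhausted.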
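One way to switch the setting on from Python is boto3's update_event_source_mapping call with FunctionResponseTypes. The sketch below is illustrative only: enable_partial_batch_response and RecordingClient are hypothetical names, the mapping UUID is a placeholder, and the stand-in client exists so the example runs without AWS credentials. In real use you would pass boto3.client("lambda") instead.

```python
def enable_partial_batch_response(lambda_client, mapping_uuid: str) -> dict:
    """Turn on ReportBatchItemFailures for one event source mapping.

    `lambda_client` is expected to behave like boto3.client("lambda").
    """
    return lambda_client.update_event_source_mapping(
        UUID=mapping_uuid,
        FunctionResponseTypes=["ReportBatchItemFailures"],
    )


class RecordingClient:
    """Stand-in for the boto3 client so the sketch runs without AWS."""

    def __init__(self):
        self.calls = []

    def update_event_source_mapping(self, **kwargs):
        # Record the arguments instead of calling the Lambda API.
        self.calls.append(kwargs)
        return kwargs


client = RecordingClient()
enable_partial_batch_response(client, "example-mapping-uuid")
print(client.calls)
```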

Lambda deployment with ADOT

The Lambda consumer needs to export spans somewhere. The AWS Distro for OpenTelemetry (ADOT) Lambda layer handles this via the AWS_LAMBDA_EXEC_WRAPPER mechanism — it wraps your handler, initializes the SDK, and exports spans after each invocation.

    aws lambda update-function-configuration \
      --function-name my-consumer \
      --layers "arn:aws:lambda:us-east-1:615299751070:layer:AWSOpenTelemetryDistroPython:18" \
      --environment "Variables={
        AWS_LAMBDA_EXEC_WRAPPER=/opt/otel-instrument,
        OTEL_SERVICE_NAME=my-consumer,
        OTEL_EXPORTER_OTLP_ENDPOINT=https://otlp.last9.io,
        OTEL_EXPORTER_OTLP_HEADERS=authorization=Basic <credentials>,
        OTEL_EXPORTER_OTLP_PROTOCOL=http/protobuf,
        OTEL_PROPAGATORS=tracecontext,baggage,
        OTEL_TRACES_SAMPLER=always_on
      }"

OTEL_EXPORTER_OTLP_HEADERS contains = signs inside the value, and OTEL_PROPAGATORS contains a comma, which the CLI shorthand syntax also uses as a separator. If you're building this programmatically, use jq to avoid shell quoting issues:

    ENV_JSON=$(jq -n \
      --arg endpoint "$OTEL_EXPORTER_OTLP_ENDPOINT" \
      --arg headers "$OTEL_EXPORTER_OTLP_HEADERS" \
      '{Variables: {
        AWS_LAMBDA_EXEC_WRAPPER: "/opt/otel-instrument",
        OTEL_SERVICE_NAME: "my-consumer",
        OTEL_EXPORTER_OTLP_ENDPOINT: $endpoint,
        OTEL_EXPORTER_OTLP_HEADERS: $headers,
        OTEL_EXPORTER_OTLP_PROTOCOL: "http/protobuf",
        OTEL_PROPAGATORS: "tracecontext,baggage",
        OTEL_TRACES_SAMPLER: "always_on"
      }}')

    aws lambda update-function-configuration \
      --function-name my-consumer \
      --environment "$ENV_JSON"

OTEL_PROPAGATORS=tracecontext,baggage is required. If this is missing or set to xray, the extract() call won't know how to parse a W3C traceparent and returns an empty context. X-Ray uses a different header format and a different propagator.
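Because a wrong propagator setting fails silently, a check at cold start can surface it in the logs. This is a minimal sketch, not part of the ADOT layer: tracecontext_enabled is a hypothetical helper, and it assumes you want to treat an unset variable the same as a wrong one, in line with the guidance above.

```python
import os


def tracecontext_enabled(env=None) -> bool:
    """Return True if OTEL_PROPAGATORS explicitly lists tracecontext."""
    env = os.environ if env is None else env
    raw = env.get("OTEL_PROPAGATORS", "")
    # The variable is a comma-separated list of propagator names.
    names = {name.strip().lower() for name in raw.split(",") if name.strip()}
    return "tracecontext" in names


print(tracecontext_enabled({"OTEL_PROPAGATORS": "tracecontext,baggage"}))
print(tracecontext_enabled({"OTEL_PROPAGATORS": "xray"}))
print(tracecontext_enabled({}))
```

Calling tracecontext_enabled() with no argument reads the real process environment, so a single log line at module import is enough to catch a misconfigured function.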

Span links vs parent-child: which to use

The approach above creates a parent-child relationship: the consumer span is a child of the producer's send_to_sqs span. This gives you a natural waterfall:

    POST /publish                  publisher-service          61ms
    └─ send_to_sqs                 publisher-service · sqs    35ms
       └─ process my-queue         lambda-consumer · sqs      54ms
          └─ business_logic        lambda-consumer            54ms

The OTel spec recommends span links instead for batch consumers, because a single Lambda invocation may process messages from multiple producers: multiple parents, one consumer span. Span links let you reference multiple parent spans without collapsing them.

In practice:

| Approach | Works best when | Trade-off |
|---|---|---|
| Parent-child | Batch size 1, or all messages from same trace | Breaks semantically with mixed-origin batches |
| Span links | Mixed-origin batch processing | Many UIs don't render links as clearly as parent-child |

For most SQS → Lambda production patterns, parent-child with per-record extraction gives the best debugging experience. If you're processing high-volume mixed batches and your observability backend renders span links well, switch to links.
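To find out whether your batches are actually mixed-origin, you can group the records of an invocation by the trace ID in their traceparent attribute. A rough sketch using only the standard library; group_records_by_trace and _record are hypothetical helpers, and the code reads the camelCase ESM shape only.

```python
from collections import defaultdict


def group_records_by_trace(records: list) -> dict:
    """Group ESM records by the trace ID in their traceparent attribute.

    Records without a usable traceparent are grouped under None.
    """
    groups = defaultdict(list)
    for record in records:
        attr = record.get("messageAttributes", {}).get("traceparent", {})
        parts = (attr.get("stringValue") or "").split("-")
        # traceparent is version-traceid-parentid-flags.
        trace_id = parts[1] if len(parts) == 4 else None
        groups[trace_id].append(record.get("messageId"))
    return dict(groups)


def _record(message_id: str, trace_id: str) -> dict:
    """Build a minimal ESM-style record for the demo below."""
    return {
        "messageId": message_id,
        "messageAttributes": {
            "traceparent": {
                "stringValue": f"00-{trace_id}-5aa11db539f9feed-01",
                "dataType": "String",
            }
        },
    }


batch = [
    _record("m1", "7d2e75097124454585a0319f10ad0e84"),
    _record("m2", "7d2e75097124454585a0319f10ad0e84"),
    _record("m3", "1" * 32),
]
groups = group_records_by_trace(batch)
mixed_origin = len(groups) > 1
print(groups, mixed_origin)
```

Logging the number of groups per invocation for a while gives you data for the decision in the table: if it is almost always 1, parent-child is a safe default.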

Testing locally with LocalStack

Before deploying, validate the full pipeline locally using LocalStack. It emulates SQS (and most other AWS services) without touching a real AWS account.

    # Start LocalStack
    docker run -d --name localstack -p 4566:4566 localstack/localstack

    # Create the queue
    aws --endpoint-url=http://localhost:4566 sqs create-queue --queue-name test-queue

    # Run the publisher (point SQS client at localhost:4566)
    # Run the consumer (read from LocalStack queue, extract context, verify spans)

To simulate the Lambda ESM format specifically (the camelCase stringValue casing), write a test that constructs the event object directly:

    from opentelemetry import trace

    # Assumes extract_context_from_sqs_record is importable from your consumer module.


    def test_esm_format_extraction():
        record = {
            "messageAttributes": {
                "traceparent": {
                    "stringValue": "00-7d2e75097124454585a0319f10ad0e84-5aa11db539f9feed-01",
                    "dataType": "String",
                }
            },
            "eventSourceARN": "arn:aws:sqs:us-east-1:123456789012:test-queue",
            "messageId": "abc-123",
        }
        ctx = extract_context_from_sqs_record(record)
        span_ctx = trace.get_current_span(ctx).get_span_context()
        assert span_ctx.trace_id == 0x7d2e75097124454585a0319f10ad0e84

This test catches the camelCase issue without needing a running Lambda. Once the pipeline is live, monitor your SQS queue metrics to verify message age, visibility timeouts, and dead-letter rates alongside your traces.
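For the LocalStack run, messages fetched with ReceiveMessage arrive in the SDK's PascalCase shape, not the ESM shape your Lambda handler sees. One option is to reshape them into an ESM-style event and feed that to the same handler. The sketch below is an assumption about how you might wire this up: to_esm_record is a hypothetical helper and maps only the fields used in this post.

```python
def to_esm_record(message: dict, queue_arn: str) -> dict:
    """Reshape a ReceiveMessage result into a Lambda ESM-style record."""
    attrs = {}
    for name, attr in message.get("MessageAttributes", {}).items():
        # PascalCase (SDK) -> camelCase (Lambda event).
        attrs[name] = {
            "stringValue": attr.get("StringValue"),
            "dataType": attr.get("DataType"),
        }
    return {
        "messageId": message.get("MessageId"),
        "receiptHandle": message.get("ReceiptHandle"),
        "body": message.get("Body"),
        "messageAttributes": attrs,
        "eventSource": "aws:sqs",
        "eventSourceARN": queue_arn,
    }


sdk_message = {
    "MessageId": "abc-123",
    "ReceiptHandle": "example-receipt-handle",
    "Body": '{"action": "process"}',
    "MessageAttributes": {
        "traceparent": {
            "StringValue": "00-7d2e75097124454585a0319f10ad0e84-5aa11db539f9feed-01",
            "DataType": "String",
        }
    },
}
event = {
    "Records": [
        to_esm_record(sdk_message, "arn:aws:sqs:us-east-1:123456789012:test-queue")
    ]
}
print(event["Records"][0]["messageAttributes"])
```

Note that ReceiveMessage only returns message attributes you ask for, so the polling call needs MessageAttributeNames=["All"] (or an explicit list including traceparent) for the trace context to show up at all.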

Full working example with Docker Compose, LocalStack, and the publisher/consumer wired end-to-end: github.com/last9/opentelemetry-examples/tree/main/python/aws-sqs-lambda

How other vendors handle this

| Vendor | SQS → Lambda linking | Approach |
|---|---|---|
| Datadog | Automatic (Python, Node.js) | Proprietary _datadog_sampling_priority_v1 MessageAttribute injected by dd-trace |
| New Relic | Manual | Same inject/extract pattern, different propagator format |
| Dynatrace | Automatic with OneAgent | Bytecode injection on Lambda; reads X-Ray headers |
| Last9 (OTel) | Manual (~20 lines) | W3C TraceContext via MessageAttributes: vendor-neutral, any OTLP backend |

Datadog is the most seamless but ties you to their propagation format — interoperability with other systems requires format translation. The OTel approach requires a small amount of code but produces standard W3C traces that work with any backend.

What the unified waterfall looks like

Once both sides are instrumented, Last9 shows a single trace waterfall:

    POST /publish                  publisher-service          61ms
    └─ send_to_sqs                 publisher-service · sqs    35ms
       └─ process my-queue         lambda-consumer · sqs      54ms
          └─ business_logic        lambda-consumer            54ms

Four spans, two services, one trace_id. The Lambda consumer span is a child of send_to_sqs, which is exactly the relationship you need to debug latency or failures across the async boundary.

When a Lambda invocation fails, you can walk up the trace to the publisher request that triggered it. When the publisher is slow, you can see how far the delay propagates into the consumer. Without context propagation, those two questions require correlating timestamps across separate traces — slow and error-prone.

Summary

The pattern is straightforward once you know the moving parts:

- Producer: inject(carrier) → convert carrier to SQS MessageAttribute format → send
- Consumer: read messageAttributes → handle both stringValue and StringValue → extract(carrier) → pass context= to your span
- ADOT layer: set OTEL_PROPAGATORS=tracecontext,baggage (required for W3C header parsing)
- Batch processing: one extract() per record, not per invocation

The ESM casing difference is the single most common reason this silently fails. If your Lambda spans are disconnected root traces and you've done everything else right, check whether your extraction code handles the camelCase keys (stringValue, dataType).

References

- Instrumenting AWS Lambda Functions with OpenTelemetry

- Implement Distributed Tracing with OpenTelemetry

- Kafka with OpenTelemetry: Distributed Tracing Guide

- OpenTelemetry with Flask: A Comprehensive Guide

- OpenTelemetry Spans Explained

- Amazon SQS Metrics: Monitor, Debug, and Optimize

- A Quick Guide for OpenTelemetry Python Instrumentation

- OTel Messaging Semantic Conventions

- AWS ADOT Lambda Layer

- Full working example — GitHub